The Doctrine of the Mean: Why "Best Practices" Is a Lie

The next person who tells you to "just follow best practices" owes you an apology. Aristotle figured out why 2,400 years ago.

Quick quiz: How much code coverage should your tests have?

If you answered "100%," you're wrong. If you answered "80%," you're wrong. If you answered "it depends," congratulations—you're thinking like Aristotle.

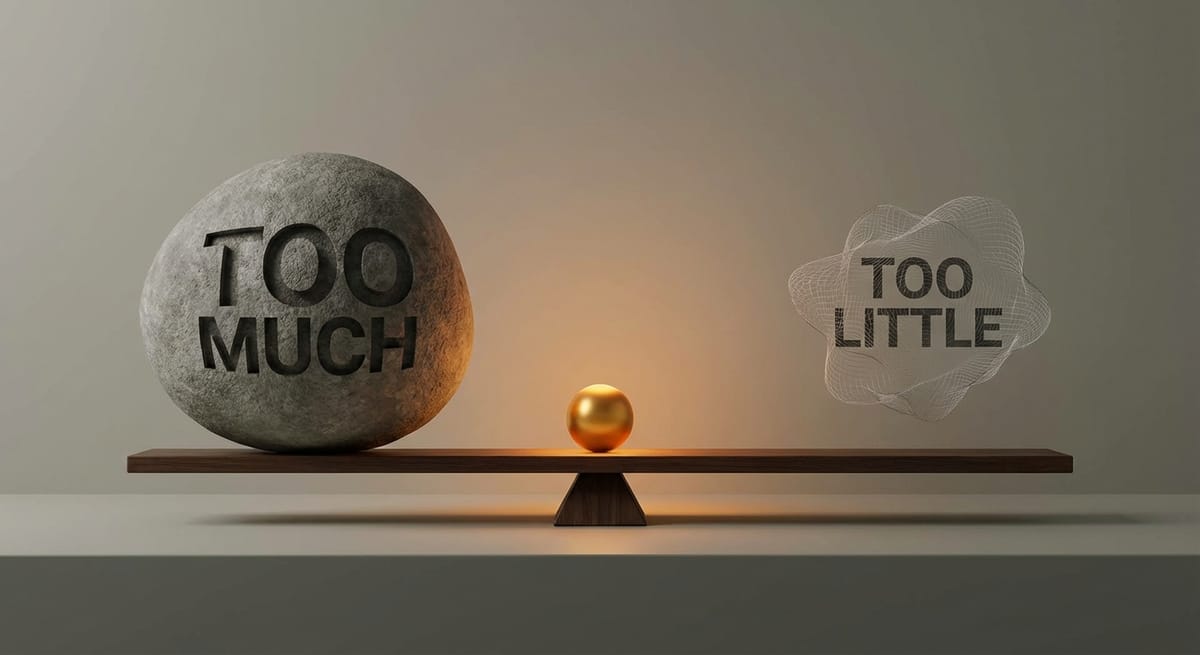

The Mean Is Not the Average

Aristotle's doctrine of the mean is wildly misunderstood. People think it means "do everything in moderation" or "find the average between extremes."

That's not what it means.

The mean is the appropriate response for a specific situation. It's context-dependent, not universal. What's courageous for a soldier is foolhardy for an accountant. What's generous for a billionaire is reckless for a barista.

There's no formula. You can't calculate the mean. You have to develop the judgment to recognize it in each situation.

Now apply this to software development.

The "Best Practice" Problem

"Best practices" assume universality. There's one right way, and everyone should do it. Just follow the checklist.

But the doctrine of the mean says: the right practice depends on context.

Consider code reviews. What's the right level of review rigor?

Too little review (deficiency): Bugs ship. Bad patterns spread. Juniors learn nothing. Technical debt compounds.

Too much review (excess): Velocity dies. Reviewers become bottlenecks. Authors feel micromanaged. Innovation stalls because everything requires approval.

The mean: Enough review to catch real issues, educate authors, and spread knowledge, but not so much that you're bikeshedding variable names while the product stalls.

Where's that mean? It depends:

- On your team's experience level

- On your system's criticality (medical device vs. internal tool)

- On your current tech debt load

- On your timeline and priorities

- On your code review culture

A startup with three senior engineers shipping an MVP has a different mean than a bank with fifty developers maintaining a core trading system. "Best practices" that ignore this context are worse than useless—they're actively misleading.

The Testing Coverage Trap

Let's revisit the coverage question.

Too little testing (deficiency): Bugs everywhere. Refactoring is terrifying. Every change might break everything. Developers fear the codebase.

Too much testing (excess): Test suite takes forever. Tests are brittle and break with every change. Developers spend more time maintaining tests than writing features. Tests don't catch real bugs, just implementation details.

The mean: Enough testing to catch real issues, enable confident refactoring, and document behavior, but not so much that you're testing getters, setters, and logging statements.

Some teams should have 90% coverage. Some teams should have 50%. Some teams have 95% coverage of the wrong things and 0% coverage of the right things. Coverage percentage tells you almost nothing about whether you've found the mean.
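Here's a minimal sketch of how a coverage number can mislead. The `shipping_fee` function and its "orders of 50 or more ship free" rule are hypothetical, invented only for illustration. The first pair of checks executes every line and both branches of the function, so a coverage tool would report it as fully covered, yet the one check that matters to the business rule is missing.

```python
def shipping_fee(order_total: float) -> float:
    """Hypothetical rule: orders of 50 or more ship free; others pay 5."""
    if order_total > 50:  # bug: the rule says "50 or more", so this should be >=
        return 0.0
    return 5.0


def high_coverage_checks() -> bool:
    # These two calls run every line and both branches of shipping_fee,
    # so line and branch coverage for the function would be complete.
    return shipping_fee(100) == 0.0 and shipping_fee(10) == 5.0


def boundary_check() -> bool:
    # The check that actually exercises the rule: an order of exactly 50.
    return shipping_fee(50) == 0.0


if __name__ == "__main__":
    print("high-coverage checks pass:", high_coverage_checks())
    print("boundary check passes:", boundary_check())
```

The first set of checks passes and the boundary check fails. Adding the boundary check would not change the coverage percentage at all, which is the point: the number measures what ran, not whether the right things were tested.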

Why We Love "Best Practices" Anyway

So why do we keep reaching for universal rules?

1. Judgment is hard. Rules are easy. "Always write tests first" requires no thought. "Write the tests that provide maximum value for this specific situation" requires deep understanding.

2. Organizational scaling. When you have 500 developers, you can't train everyone to have perfect judgment. Universal rules at least create consistency.

3. Cover-your-ass culture. "I followed best practices" is a defense. "I made a judgment call" is an admission of responsibility.

4. Consultant economics. "It depends" doesn't sell training courses. "Follow these 10 best practices" does.

The tragedy is that "best practices" often become worst practices when applied without judgment. Strict TDD in a two-day hackathon. Comprehensive documentation for a prototype that might be thrown away. Full code review process for a typo fix.

The practices aren't bad. The universal application is bad.

How to Find the Mean

If the mean can't be calculated, how do you find it?

Aristotle's answer: through practice, with guidance from those who've developed good judgment.

You learn to find the mean by:

1. Trying things. Sometimes you'll be too aggressive (excess). Sometimes too conservative (deficiency). The failures teach you where the mean lies.

2. Observing practitioners with good judgment. How do experienced developers decide what to test? How do they balance speed and quality? Their behavior reveals the mean better than any documentation.

3. Reflecting on outcomes. When a decision worked, why? When it failed, why? Over time you build a library of situations and outcomes to match new decisions against.

4. Getting feedback. Others see your blind spots. Code review, mentorship, retrospectives—all help calibrate your judgment toward the mean.

This is why DX teams shouldn't be documentation factories. The job isn't to codify "best practices." The job is to develop organizational judgment—to help teams find their own mean for their own context.

The Uncomfortable Truth

Here's what the doctrine of the mean implies for DX work:

- You can't just ship guidelines. Guidelines without judgment create cargo cults.

- You need guides. People who've developed good judgment must help others develop it too.

- Context matters more than rules. A DX team that ignores context is actively harmful.

- Finding the mean is ongoing. As context changes (new team members, new systems, new market pressures), the mean shifts. Yesterday's right answer is today's wrong answer.

The next time someone hands you a "best practices" document, ask: "Best for whom? In what context? According to whose judgment?"

If they can't answer, they're selling you a lie.

Next in series: "Knowing Is Not Becoming: Why Your Documentation Doesn't Work"